The Psychology of Visual Attention

Which stimuli capture attention and why?

Introduction

Capturing attention used to be easy.

Today, it’s pretty tough.

So I read hundreds of journal articles to answer the question: What captures attention?

This guide explains the 9 stimuli that capture attention.

Why Do Certain Things Capture Attention?

Three key factors:

1) We're surrounded by a lot of stuff

We can’t see everything, so we use selective attention: we consciously perceive only a fraction of the stimuli that reach our senses (Moran & Desimone, 1985).

In fact, that’s the mechanism behind subconscious influence. Many stimuli enter our brain without being detected consciously — yet they’re still in our brain, influencing our perception and behavior.

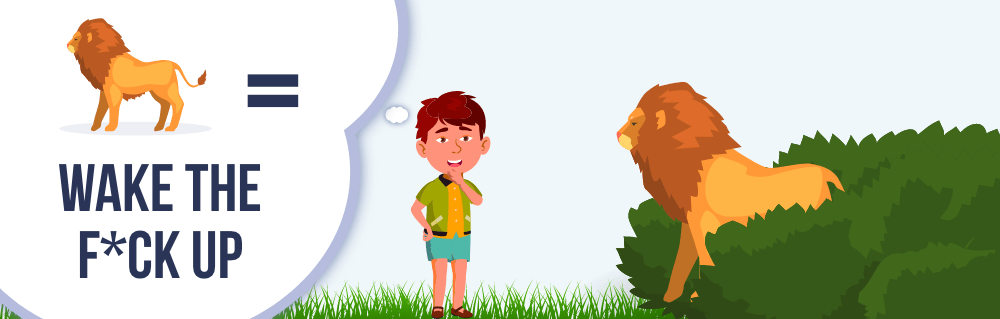

2) Our ancestors needed to see important stimuli quickly

In order to survive, our ancestors needed to see life-threatening stimuli.

…reproductive potential of individuals, therefore, was predicated on the ability to efficiently locate critically important events in the surroundings. (Öhman, Flykt, & Esteves, 2001, p. 466)

And that’s what happened. Our ancestors developed brain regions that monitored the surrounding environment for critical stimuli:

…there should be systems that incidentally scan the environment for opportunities and dangers; when there are sufficient cues that a more pressing adaptive problem is at hand—an angry antagonist, a stalking predator, a mating opportunity—this should trigger an interrupt circuit on volitional attention (Cosmides & Tooby, 2013, p. 205)

Our brain alerted us whenever it detected a threat.

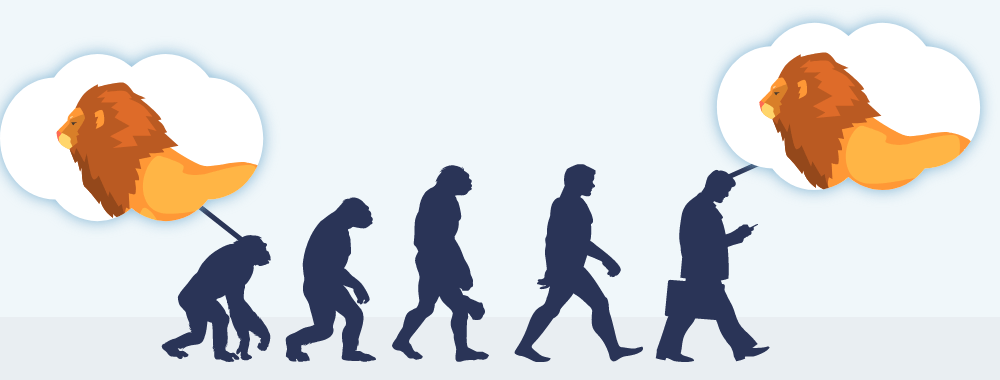

3) Modern humans inherited those mechanisms

Today, our brain still alerts us to threats.

But here’s the funny thing: we developed these mechanisms millions of years ago, and many stimuli that were once “life-threatening” are far less dangerous today.

Consider vehicles and animals.

Today, vehicles threaten our survival more than animals. But we’re wired to notice animals more than vehicles.

We are more likely to fear events and situations that provided threats to the survival of our ancestors, such as potentially deadly predators, heights, and wide open spaces, than to fear the most frequently encountered potentially deadly objects in our contemporary environment (Öhman & Mineka, 2001, p. 483)

Humans might evolve to detect “vehicle” features thousands of years from now, but hopefully we’ll be teleporting by then.

Here’s the point: we’re wired to notice stimuli that mattered to our ancestors’ survival. Even today. Even when those stimuli seem harmless. If you want to capture attention, display stimuli that would have mattered to the survival of our ancestors.

1. Salience

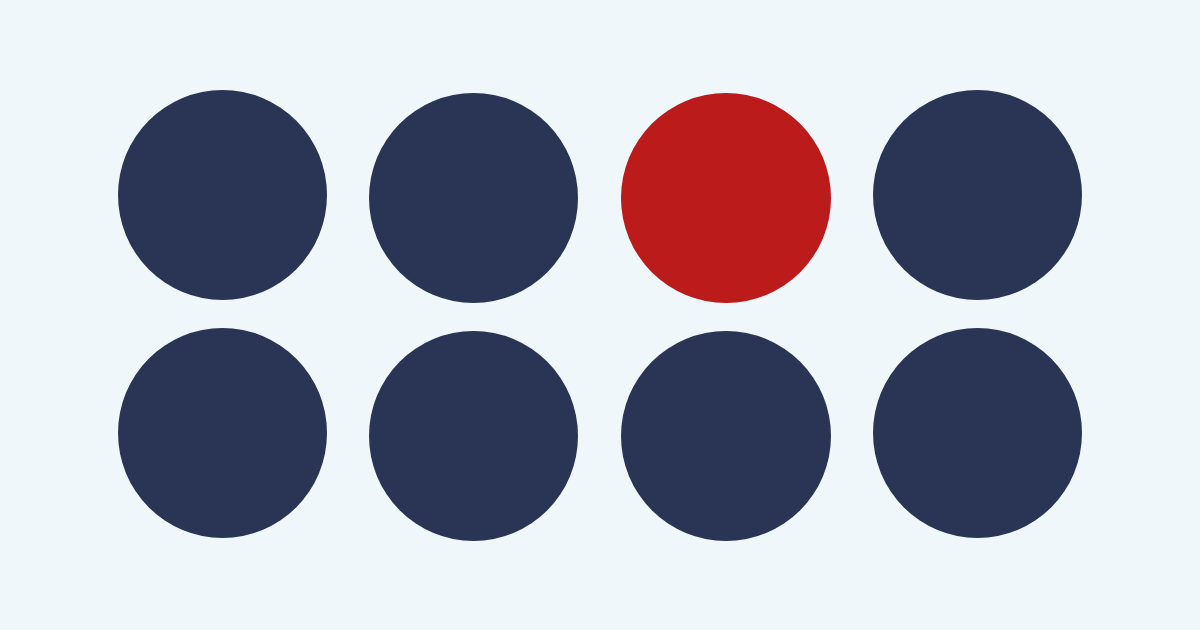

Color

Color might be the most salient dimension (Milosavljevic & Cerf, 2008).

In particular, females may be more likely to notice red stimuli, perhaps because ancestral foragers needed to detect red fruit among green plants (see Regan et al., 2001).

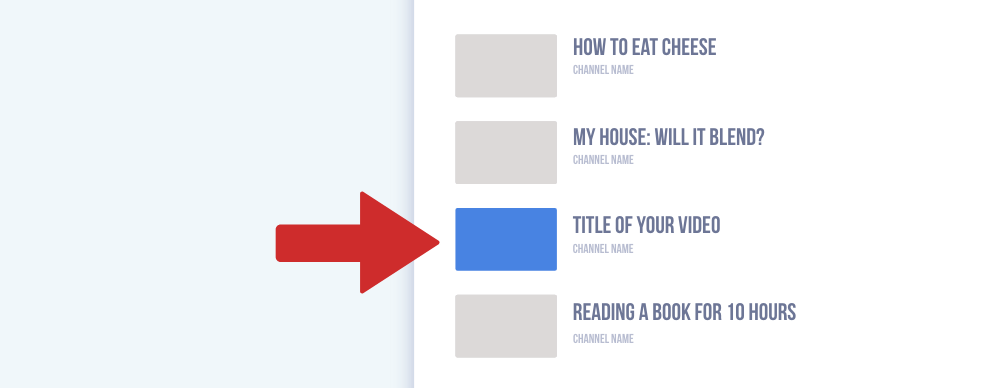

Want people to notice your YouTube video? Look at surrounding thumbnails. Which color are they? Choose a contrasting color that stands out.

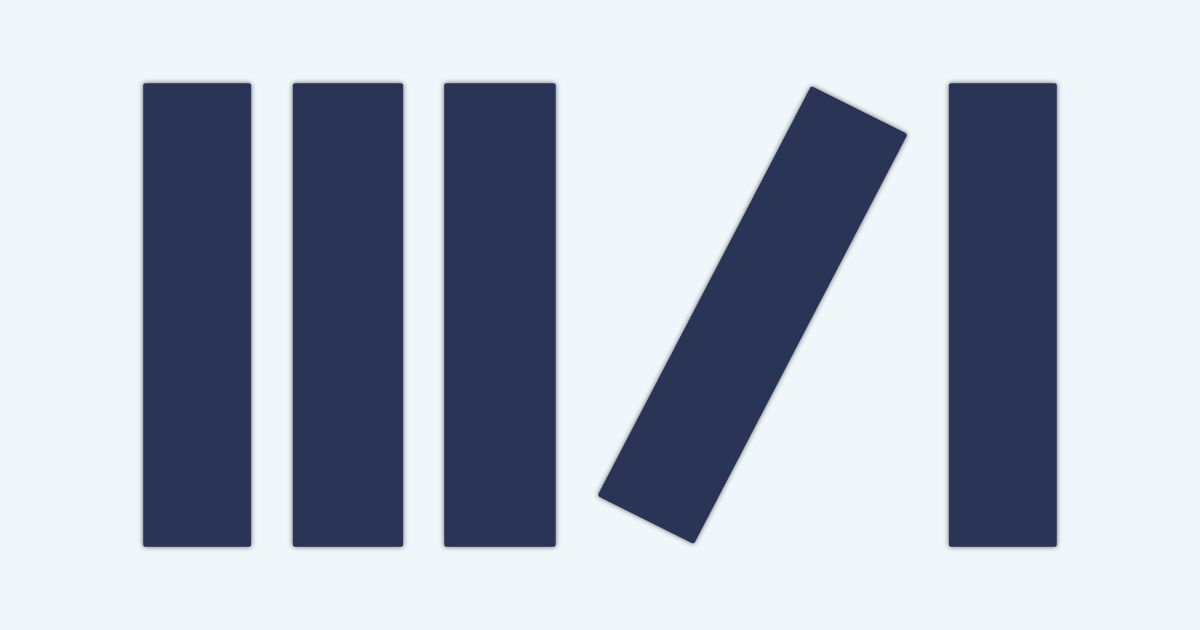
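To make “choose a contrasting color” a little more concrete, here is a minimal sketch in Python. It rests on assumptions that are ours, not the researchers’: each neighboring thumbnail is summarized by one dominant hue (in degrees on a 360-degree color wheel, as in HSL), and the hue farthest from all of them is treated as the most contrasting. Real salience also depends on saturation and brightness, which this sketch ignores. The function names are hypothetical.

```python
from __future__ import annotations


def hue_distance(a: float, b: float) -> float:
    """Shortest angular distance between two hues on a 360-degree color wheel."""
    d = abs(a - b) % 360
    return min(d, 360 - d)


def most_contrasting_hue(neighbor_hues: list[float], step: int = 1) -> float:
    """Return the candidate hue whose closest neighboring hue is as far away as possible.

    Hypothetical helper (an assumption, not a published method): candidates are
    sampled every `step` degrees, and ties go to the lowest candidate hue.
    """
    if not neighbor_hues:
        raise ValueError("need at least one neighboring hue")
    best_hue, best_gap = 0.0, -1.0
    for candidate in range(0, 360, step):
        # How close is this candidate to the most similar existing thumbnail?
        gap = min(hue_distance(candidate, h) for h in neighbor_hues)
        if gap > best_gap:
            best_hue, best_gap = float(candidate), gap
    return best_hue
```

For example, `most_contrasting_hue([0, 30, 60])` returns `210.0`: if the surrounding thumbnails are red, orange, and yellow, the sketch suggests a blue.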

Orientation

We also notice misalignment, such as a tilted line among vertical ones (Treisman & Gormican, 1988).

Want people to notice your Facebook post? Add white rectangles at the top and bottom so that it appears tilted.

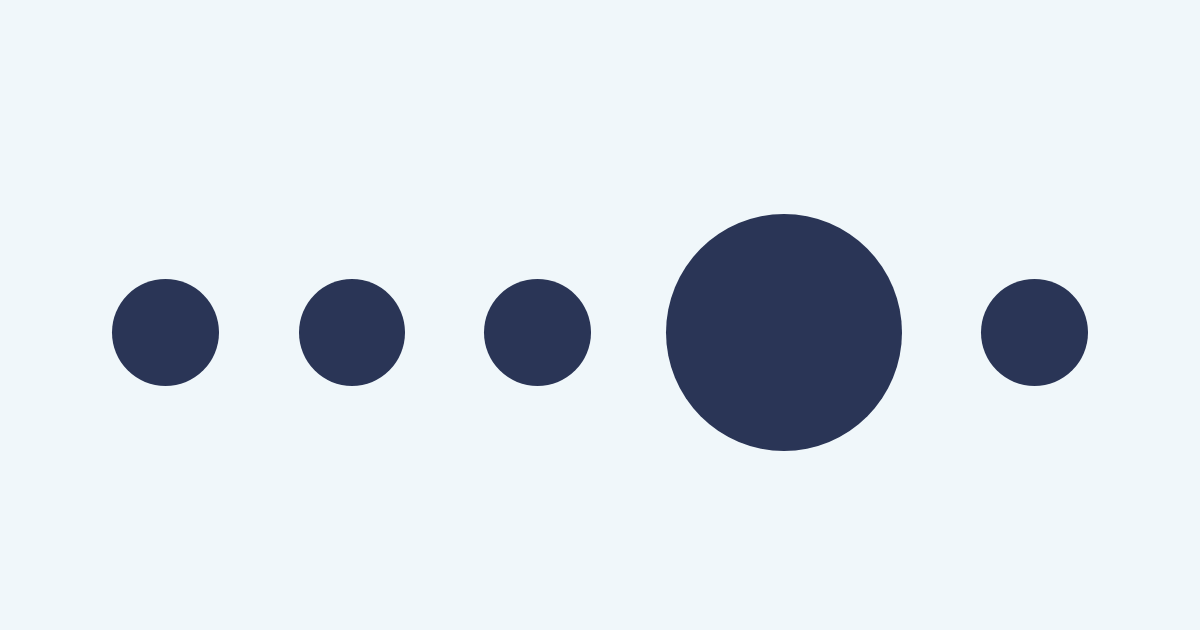

Size

Contrasting size captures attention (Huang & Pashler, 2005).

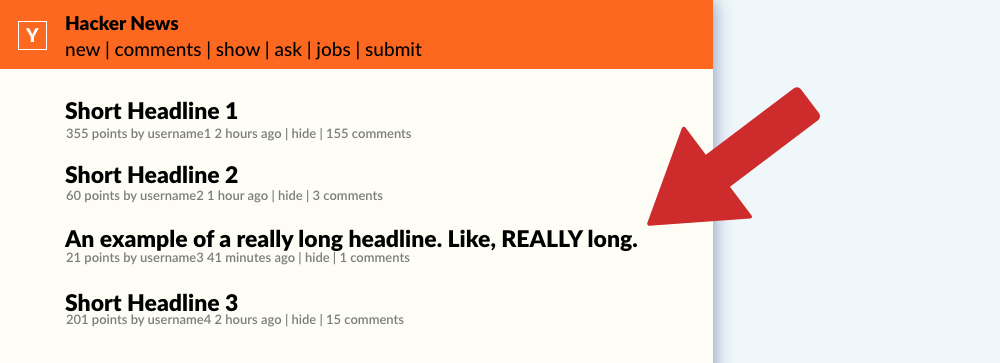

Submitting an article to Reddit or Hacker News? Check the length of recent submissions — are they short or long?

- Short? Write a long headline.

- Long? Write a short headline.
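The headline rule above can be written as a tiny decision function. This is a minimal sketch under stated assumptions: “length” means character count, you collect the recent titles yourself, and the 60-character cutoff between “short” and “long” is an arbitrary placeholder, not a number from the research. The function name is hypothetical.

```python
from __future__ import annotations

from statistics import median


def contrasting_headline_style(recent_titles: list[str], cutoff: int = 60) -> str:
    """Suggest "long" if recent titles skew short, otherwise "short".

    Hypothetical helper: `cutoff` (in characters) is an arbitrary placeholder
    for where "short" ends and "long" begins.
    """
    if not recent_titles:
        raise ValueError("need at least one recent title")
    typical_length = median(len(title) for title in recent_titles)
    # Contrast with the typical length so your headline stands out by size.
    return "long" if typical_length < cutoff else "short"
```

Using the median rather than the mean keeps one unusually long or short submission from flipping the suggestion.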

2. Motion

Motion Onset

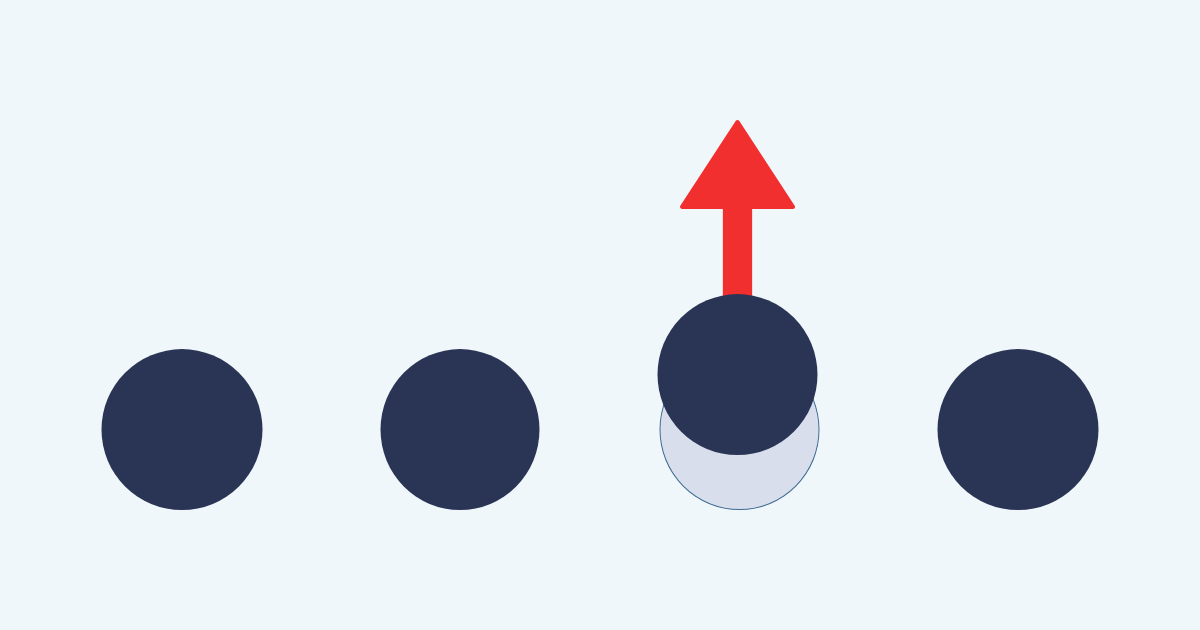

Motion onsets are changes from stillness to movement (Abrams & Christ, 2003).

Want website visitors to notice your button? Add a motion onset, like pulsing.

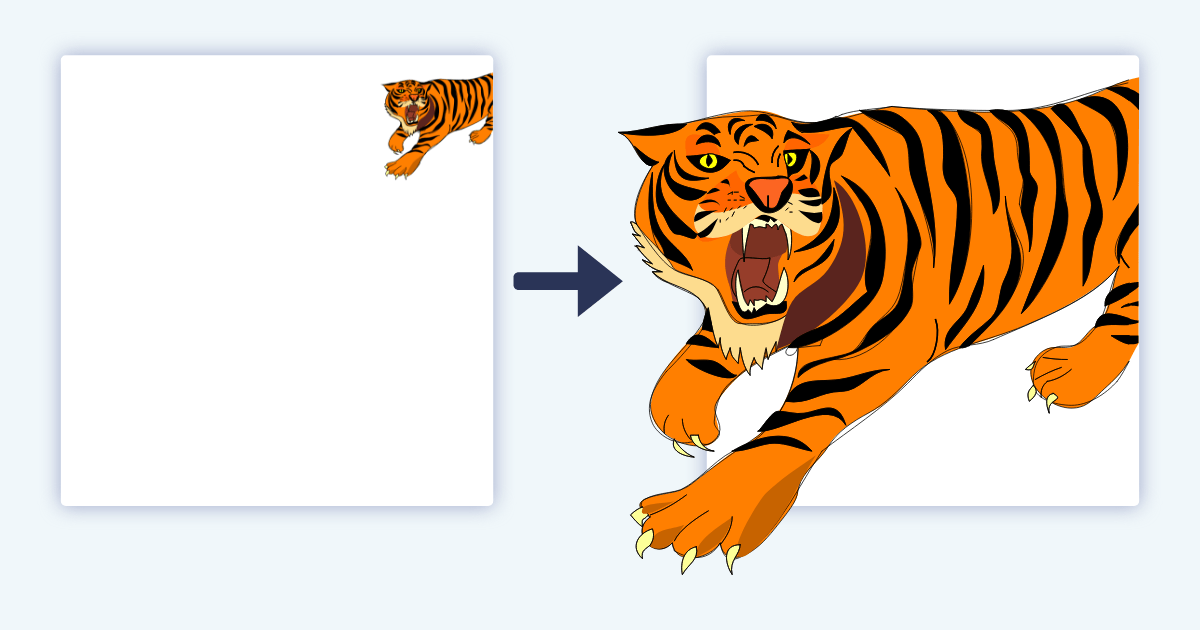

Looming Motion

Looming motion occurs when stimuli get larger:

...looming objects are more likely than receding objects to require an immediate reaction, we speculated that the potential behavioral urgency of a stimulus might contribute to whether or not it captures attention. (Franconeri & Simons, 2005, p. 962)

Perhaps you could start a video by zooming inward — this looming motion is more likely to capture and sustain attention.

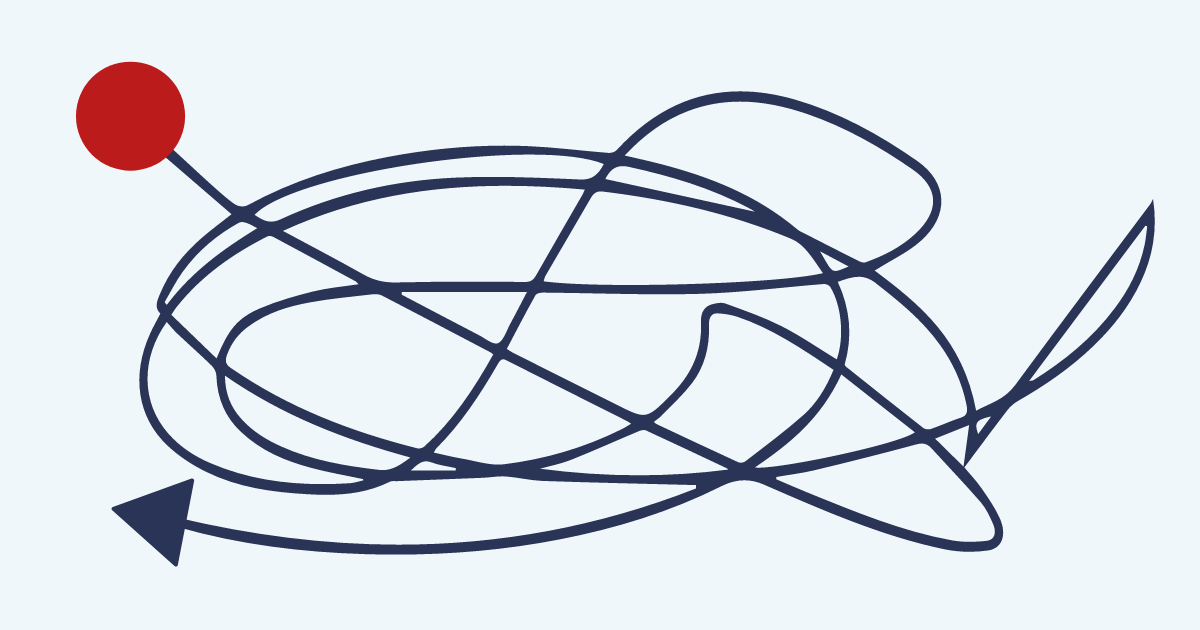

Animate Motion

Animate motion is unpredictable motion, such as sudden changes in speed or direction (Pratt, Radulescu, Guo, & Abrams, 2010). If a predator attacked without warning, we needed to be prepared.

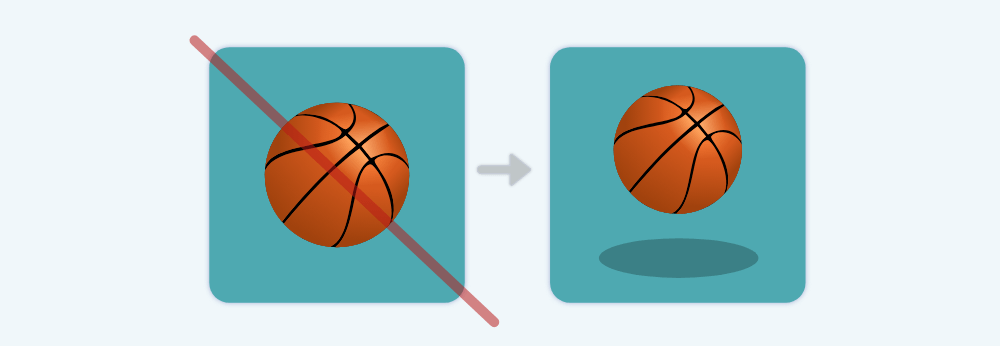

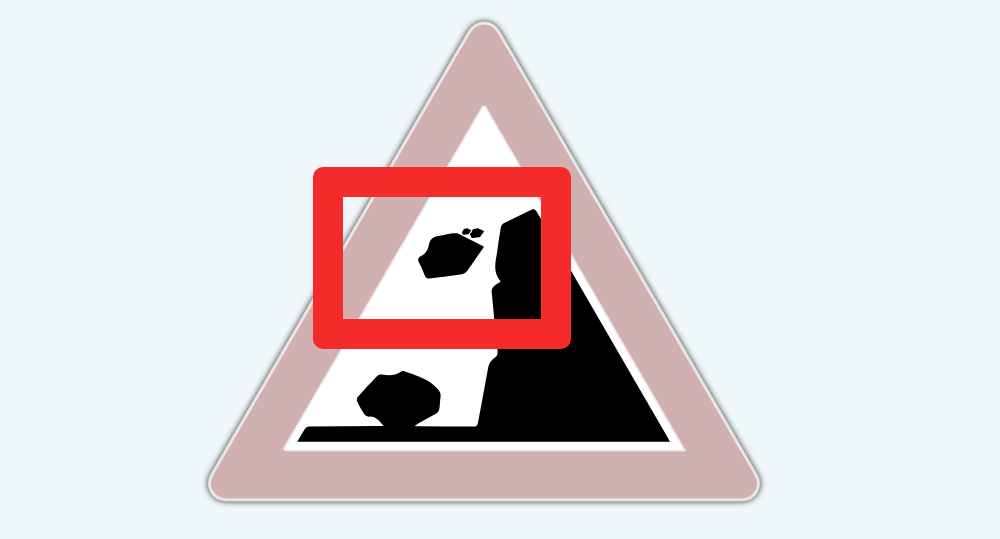

Dynamic Imagery

Motion doesn’t need literal movement. Static images capture attention when they depict motion (Cian, Krishna, & Elder, 2015).

Designing an app thumbnail? Add motion.

Designing a traffic sign? Add motion.

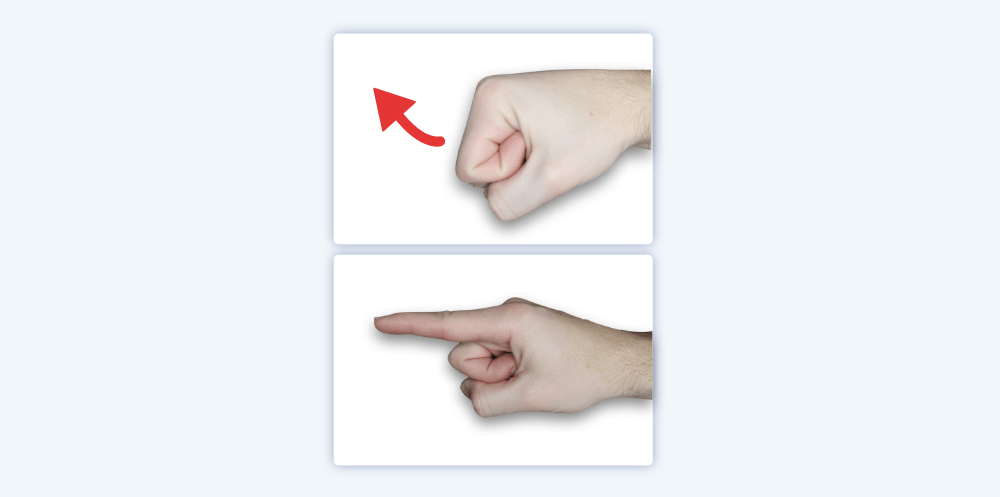

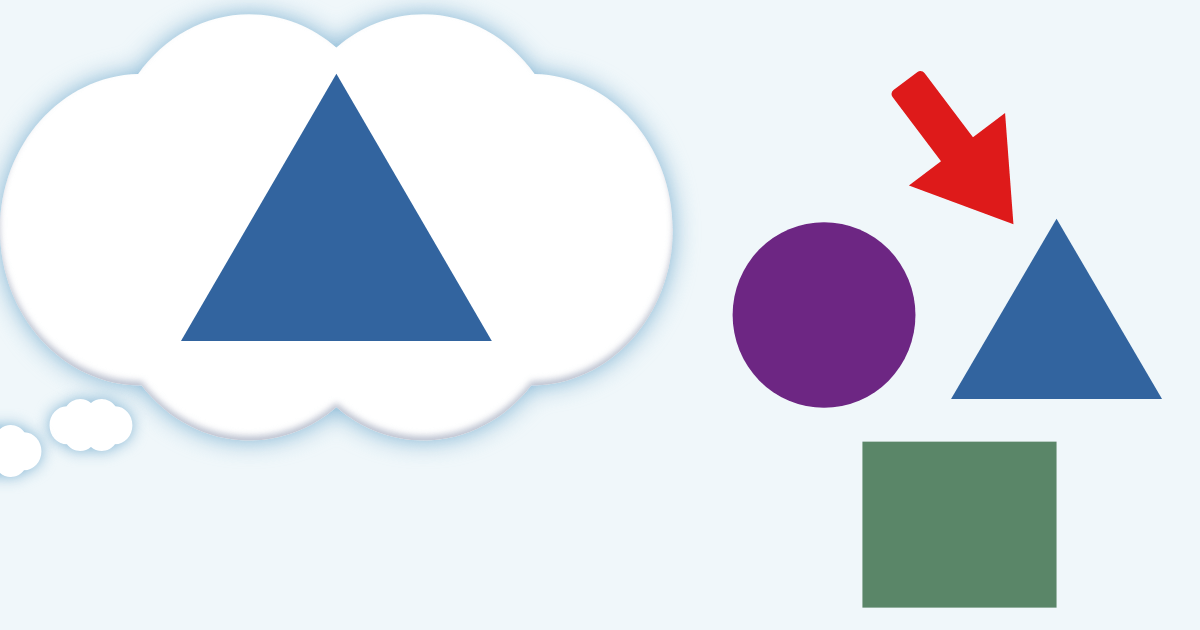

Motion Capacity

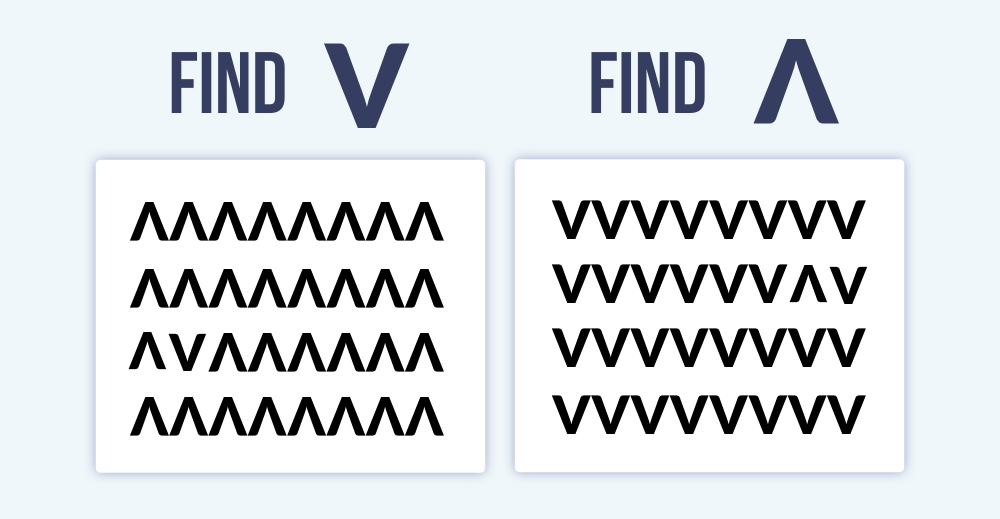

People can find a “V” faster than a “Λ” shape:

Researchers argued that we’re more likely to notice a V-shape because it resembles the eyebrows of an angry person (Larson, Aronoff, & Stearns, 2007). Supposedly, this ability helped us survive.

Perhaps...but I’m skeptical. It took me dozens of photos to capture the downward shape in my eyebrows above. Plus, the idea of “universal” emotions and facial expressions has many theoretical issues, which are beyond the scope of this guide.

Instead, I suspect a more plausible explanation: motion capacity.

A V-shape can easily move: it tilts from side to side. A Λ-shape, however, remains stable. Thus, we may be more likely to notice stimuli that possess the capacity for motion, an ability that would have helped our ancestors survive.

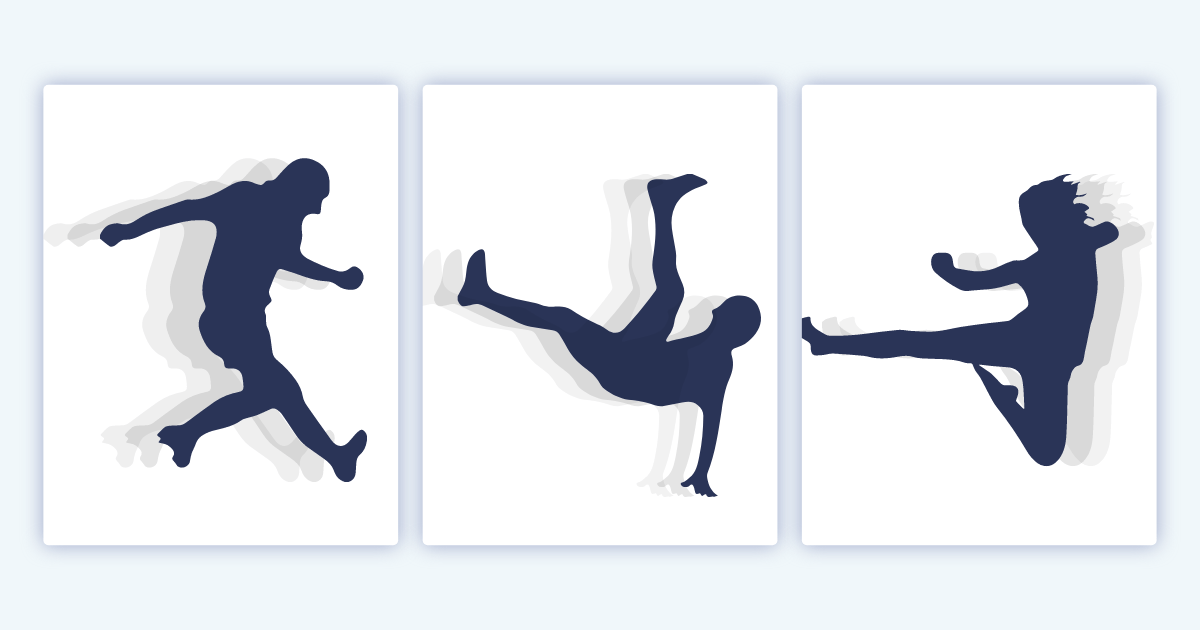

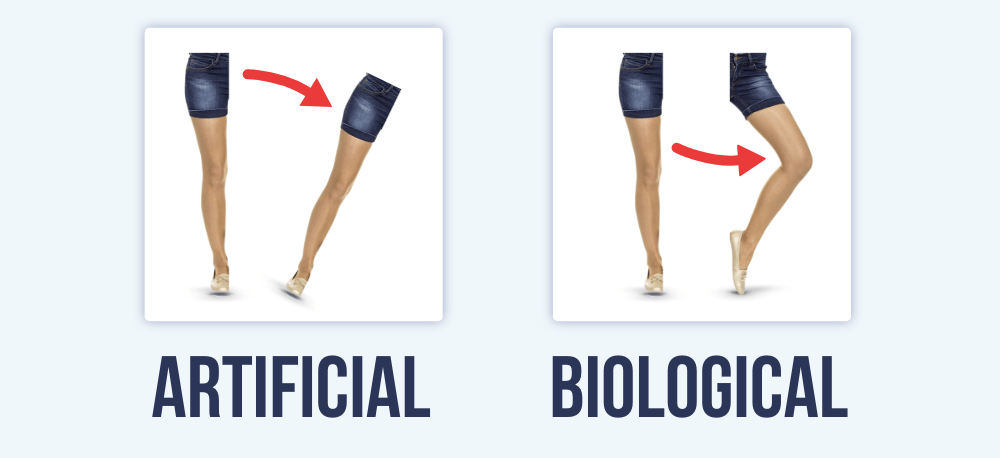

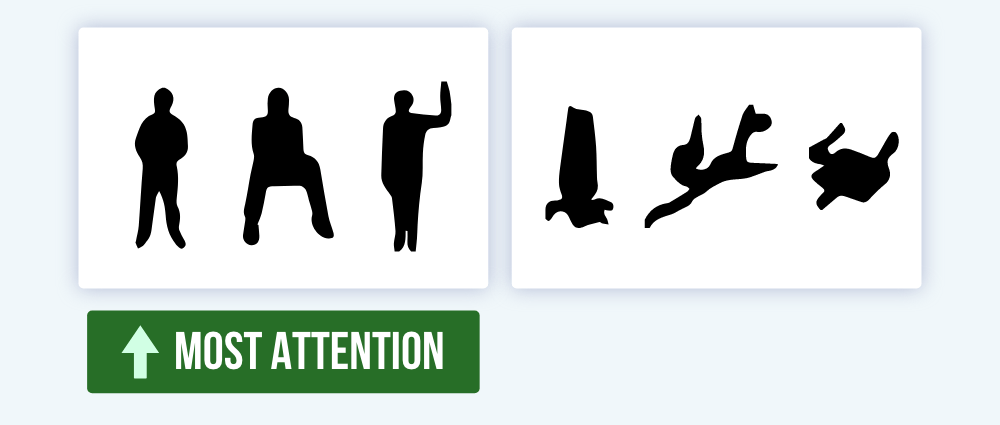

Biological Motion

Finally, humans are wired to detect the motion of other humans (Troje, 2008).

…the right pSTS, revealed an enhanced response to human motion relative to dog motion. This finding demonstrates that the pSTS response is sensitive to the social relevance of a biological motion stimulus. (Kaiser, Shiffrar, & Pelphrey, 2012, p. 1)

Biological motion requires natural body movements. For example, newly hatched chicks prefer natural body movements of a hen, rather than an artificially rotating hen (Vallortigara, Regolin, & Marconato, 2005).

Humans are the same. We notice biological motion:

3. Agents

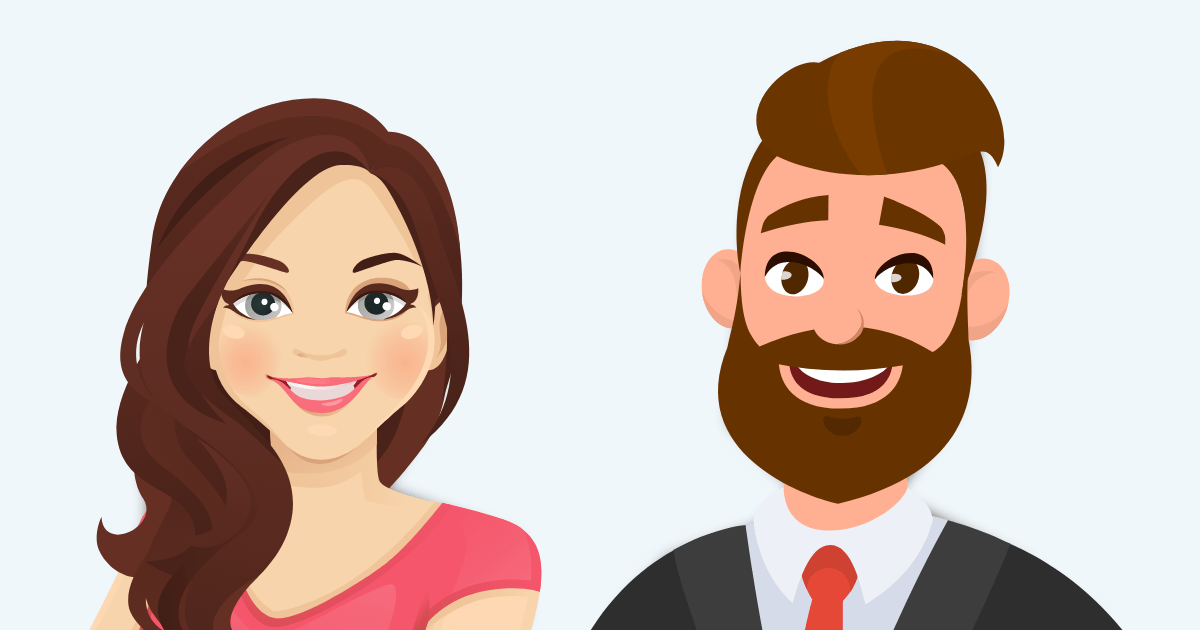

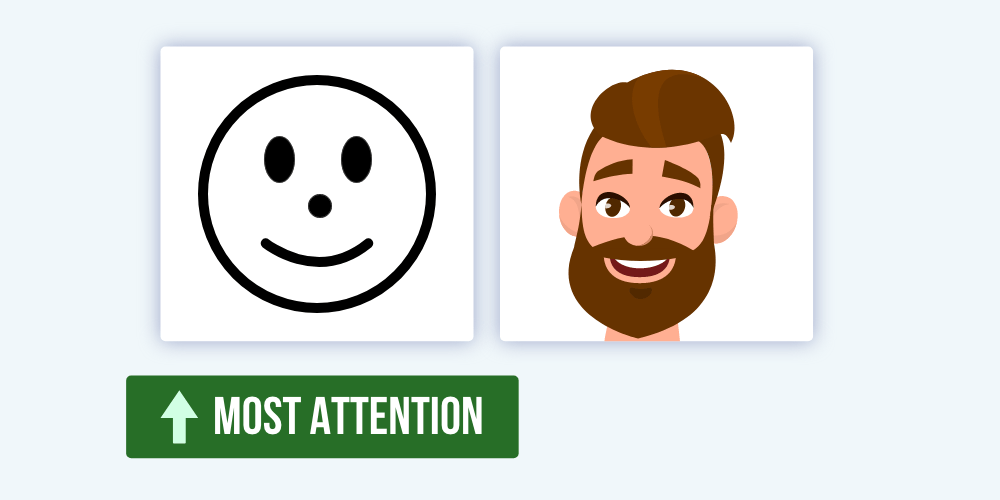

Faces

Images of people activate a brain region involved in social perception, the superior temporal sulcus (STS; Allison, Puce, & McCarthy, 2000).

In particular, faces activate the fusiform gyrus (Puce et al., 1996).

Researchers have found that people detect changes in faces more easily than in other objects (e.g., clothes; Ro, Russell, & Lavie, 2001).

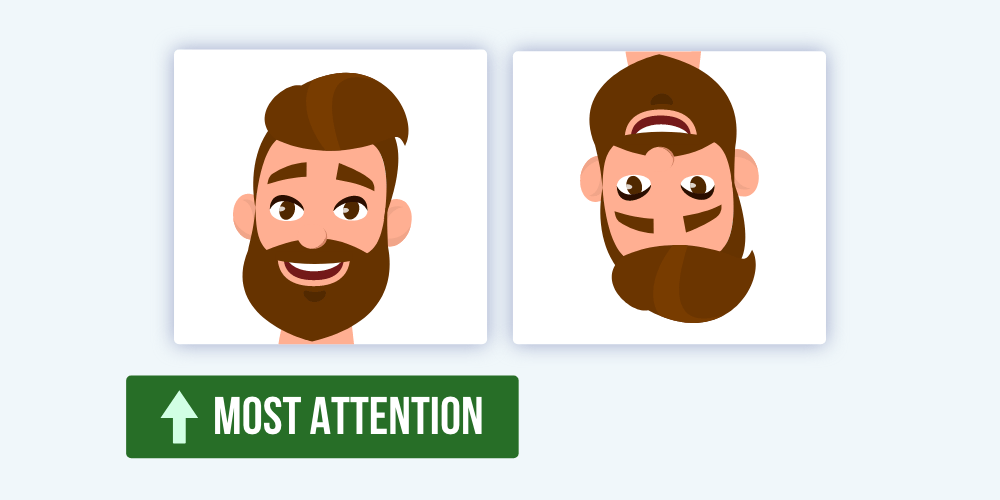

However, faces need to be upright (Eastwood, Smilek, & Merikle, 2003). Thanks to the face inversion effect, we’re slower to detect inverted faces (Epstein et al., 2006).

Also, here’s a question. What makes a face...well...a face? When does our brain stop recognizing a face?

Turns out, our brain looks for underlying geometric patterns:

...our first study indicated that the overall geometric configuration provided by the facial features, rather than individual features, was how a culture defined the emotional representation. (Aronoff, 2006, p. 85)

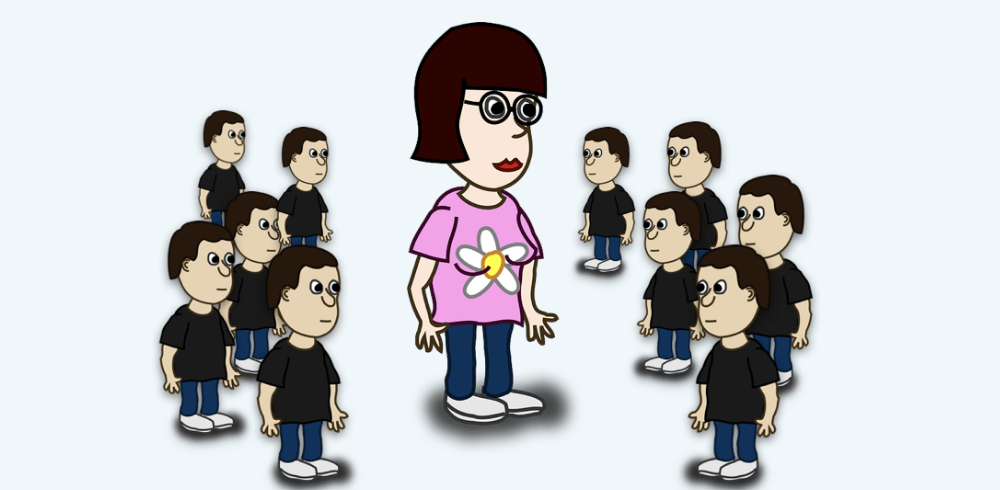

Ironically, schematic faces can be more attention-grabbing than realistic faces because they are built with geometric shapes.

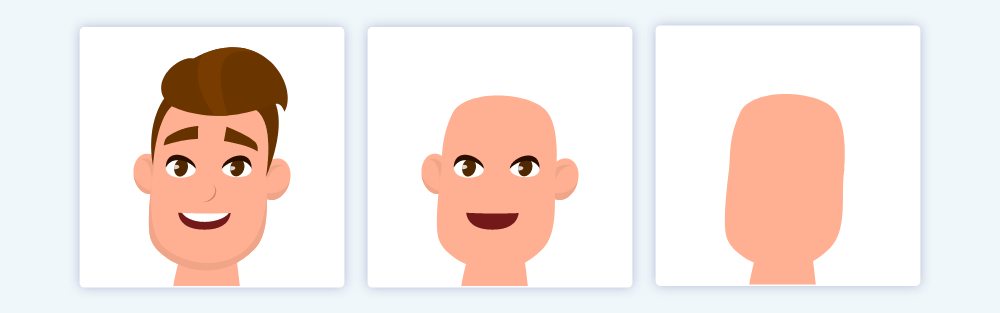

Bodies

Our brain can also detect the human body:

...a distinct cortical region in humans that responds selectively to images of the human body, as compared with a wide range of control stimuli. This region was found in the lateral occipitotemporal cortex (Downing, Jiang, Shuman, & Kanwisher, 2001, p. 2470)

In one study, blobs captured more attention when they resembled a human body (Downing et al., 2004).

Still, we allocate the most attention when both faces and bodies are present (Bindemann et al., 2010).

Body Parts

Finally, our brain has regions that detect individual body parts (Peelen & Downing, 2007).

For example, in macaque monkeys, researchers found a direct relationship between neural activation and hand realism: activation was greater with realistic hands (Desimone et al., 1984).

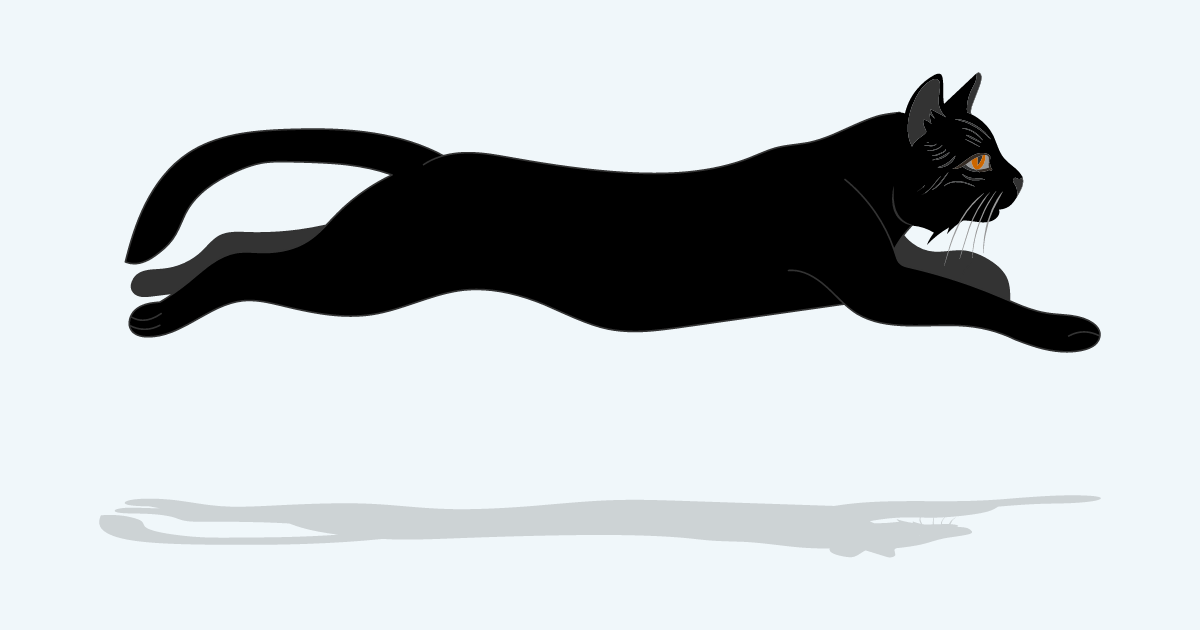

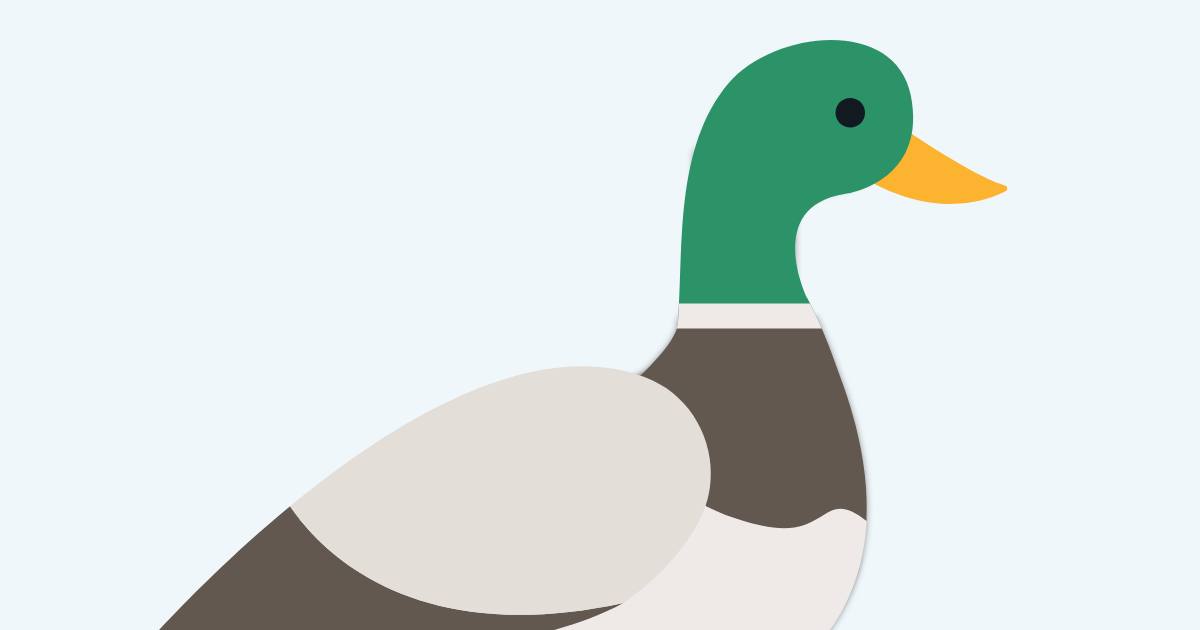

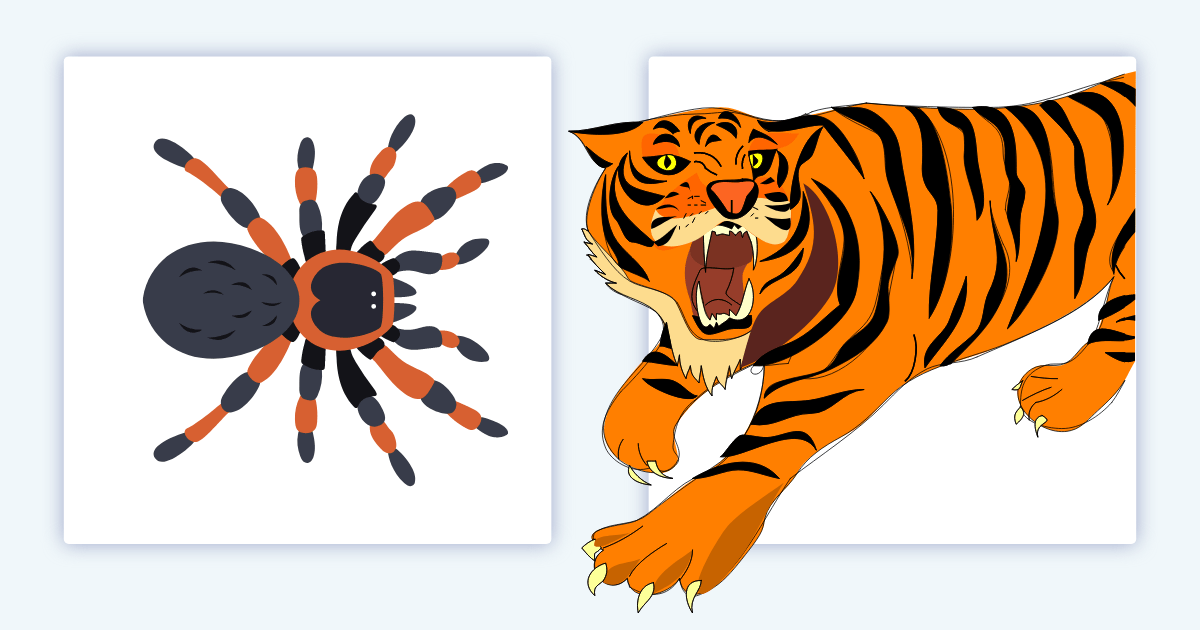

Animals

If you want to go viral, you just need cute cats.

Seriously. Our ancestors needed to detect animals for survival:

Information about non-human animals was of critical importance to our foraging ancestors. Non-human animals were predators on humans; food when they strayed close enough to be worth pursuing; dangers when surprised or threatened by virtue of their venom, horns, claws, mass, strength, or propensity to charge (New, Cosmides, & Tooby, 2007, p. 16598)

They developed brain regions that detected animals in their periphery. And modern humans inherited those mechanisms. Today, animals capture attention.

This monitoring seems to be triggered by anything your brain categorizes as an animal:

The monitoring system responsible appears to be category driven, that is, it is automatically activated by any target the visual recognition system has categorized as an animal. (Cosmides & Tooby, 2013, p. 206)

As you’ll see later, some animals capture more attention than others.

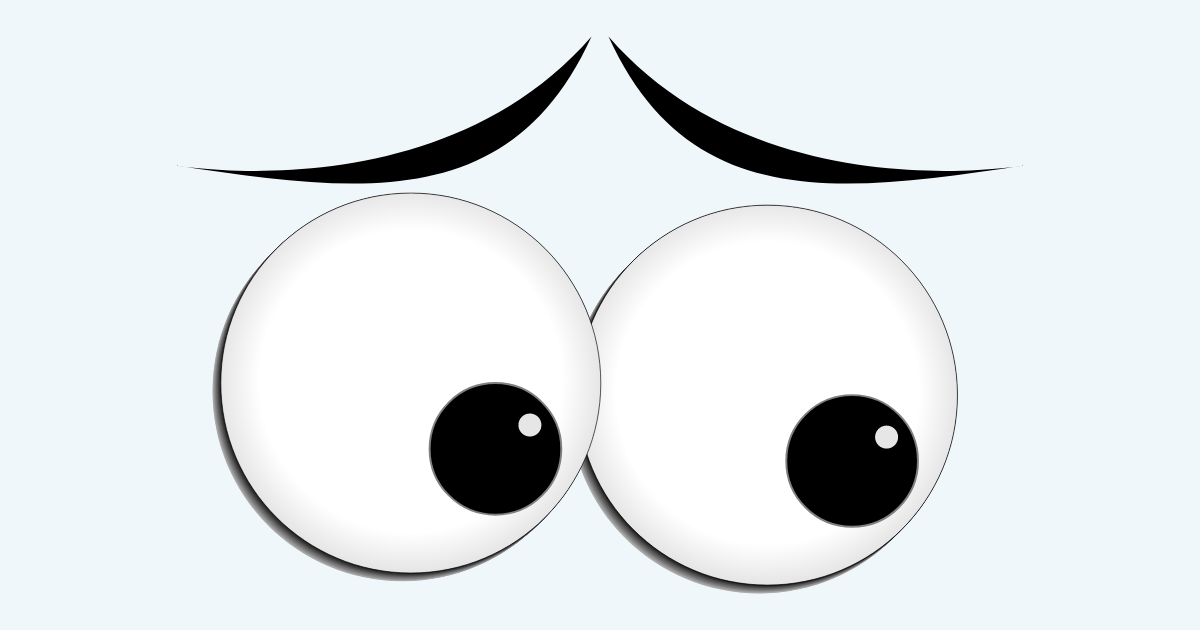

4. Spatial Cues

Eye Gaze

Eye gaze captures attention automatically.

Sure, it helped us locate objects and people (Emery, 2000). But there’s another reason: social dominance.

Social groups, among humans and other primates, form dominance hierarchies (Chance, 1967). Some creatures are more important than others. In order to survive, our ancestors needed to understand their position in this hierarchy. And they needed to identify the most dominant creature.

How? They relied on social attention.

Everyone in a society looks at the most dominant creature more often.

Ancestors who failed to notice these gazes (and thus identify the most dominant creature) would have picked a fight with the wrong beast. And they died.

Whoops.

In particular, we developed two mechanisms:

- We became better at detecting eyes. Gaze following became “hard-wired” in our brain via the superior temporal sulcus, amygdala, and orbitofrontal cortex (Emery, 2000).

- Our eyes became more salient. Indeed, “the physical structure of the eye may have evolved in such a way that eye direction is particularly easy for our visual systems to perceive.” (Langton, Watt, & Bruce, 2000, p. 52)

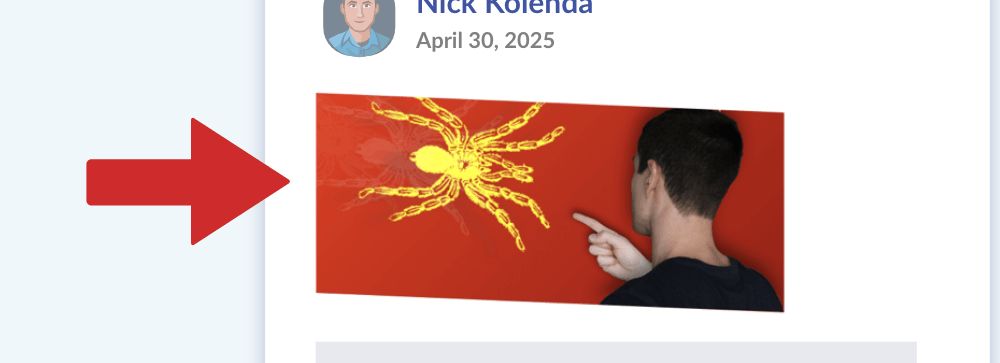

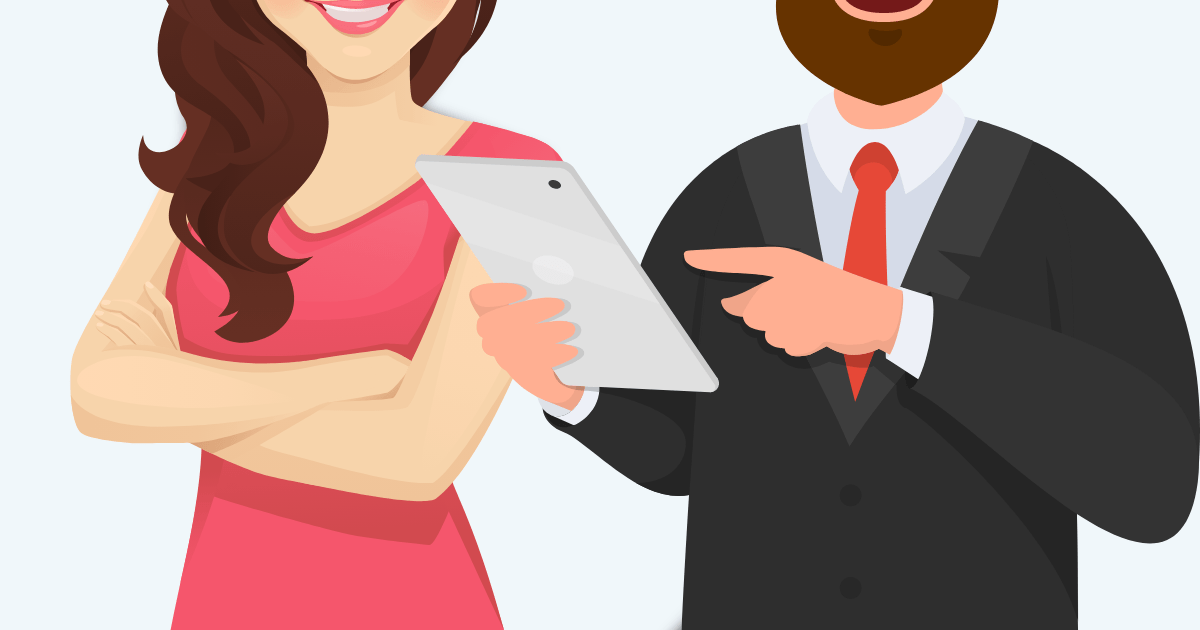

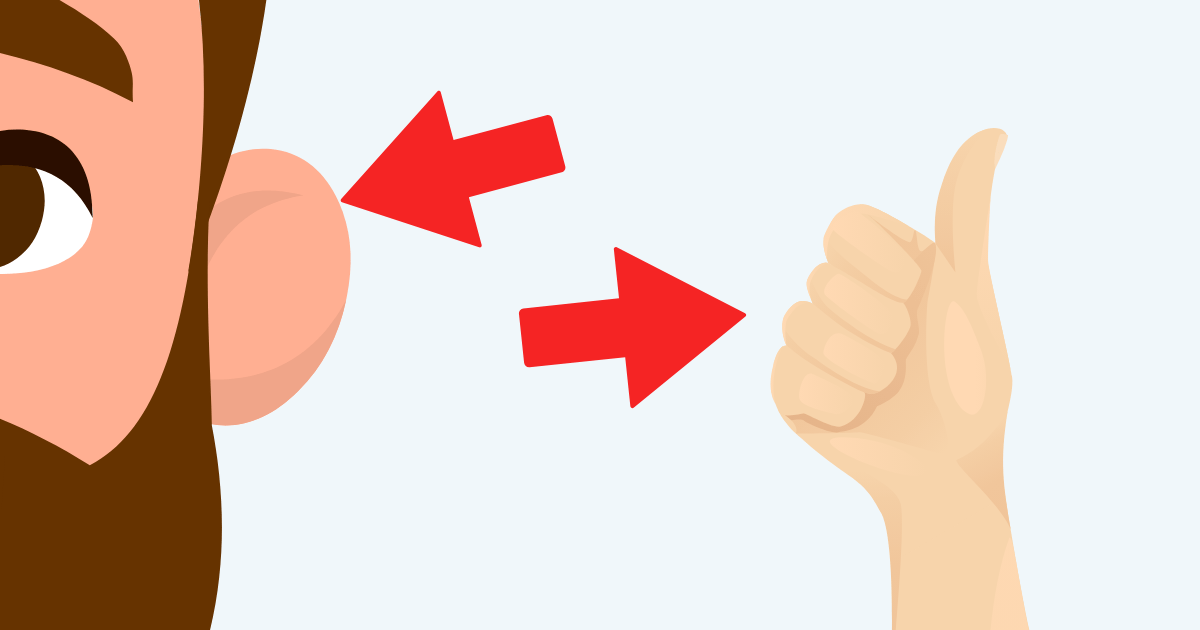

Body Orientation

Bodies can imply the direction of gaze, too.

This effect is additive with eye gaze (Langton & Bruce, 2000).

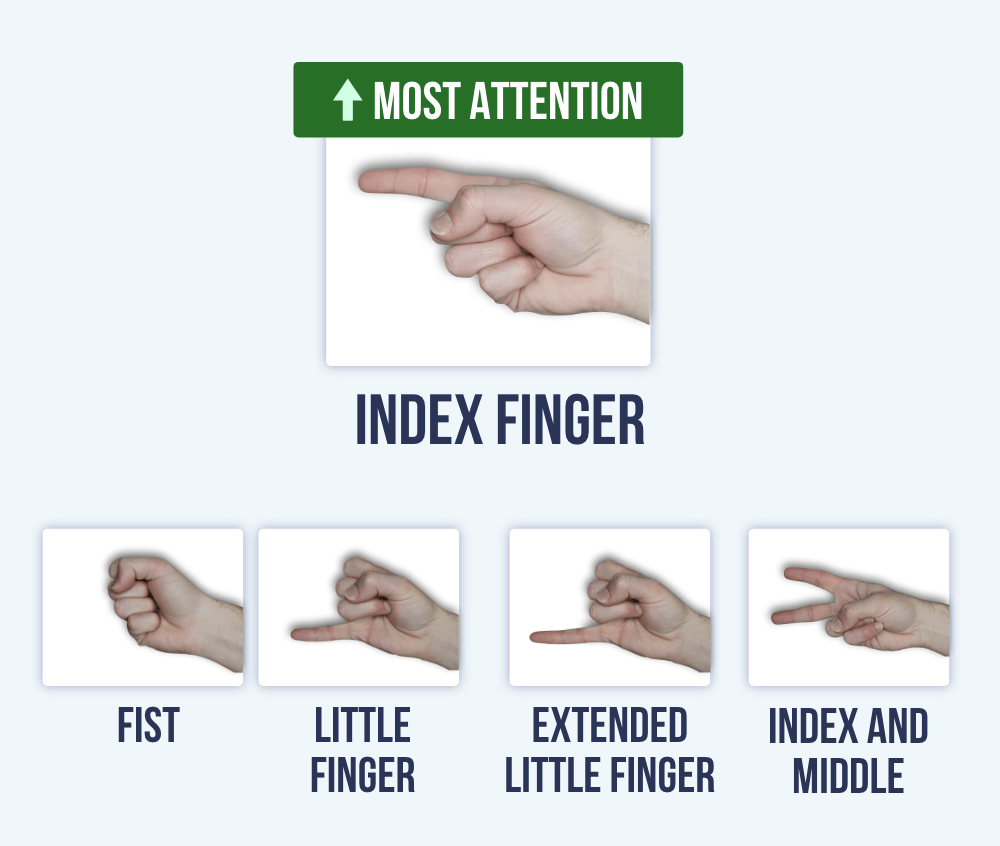

Pointing

Pointing captures attention, too.

Researchers compared multiple gestures. Turns out, an isolated index finger captured the most attention (Ariga & Watanabe, 2009).

Why the index finger? I suspect it's the ease and accuracy.

The index finger has only one adjacent finger, so we can extend this finger quickly.

The pinky also has a single adjacent finger, but the index finger is longer — and thus more accurate for pointing.

Fast forward to today: parents teach their kids by pointing. Enough exposures instill an automatic response, so when we see a pointing gesture, we instinctively look.

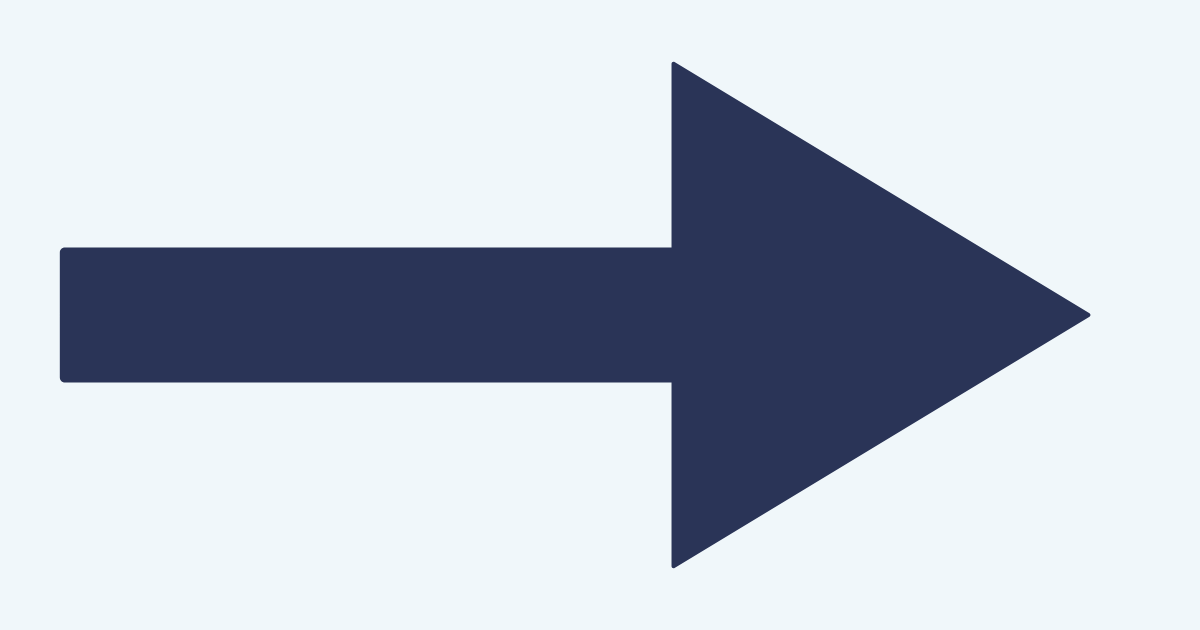

If that explanation is correct, then other spatial cues (e.g., arrows) should capture attention, too.

Arrows

Indeed, arrows capture attention, too (Ristic & Kingstone, 2006).

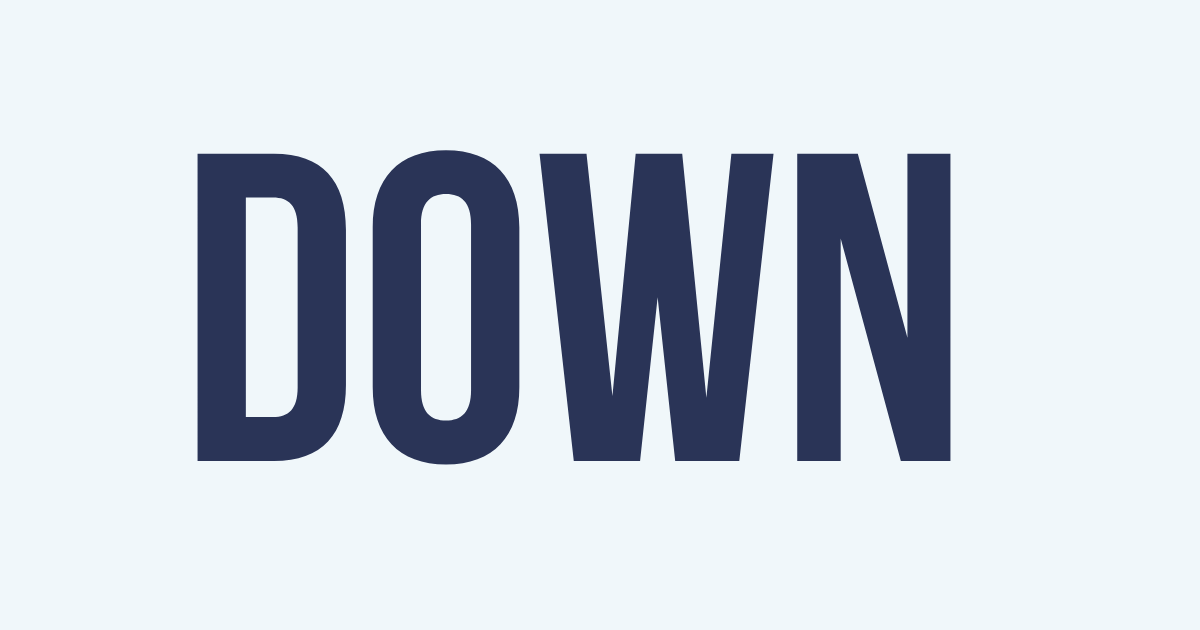

Directional Words

Spatial words capture attention (Hommel, Pratt, Colzato, & Godijn, 2001).

Don’t just ask people to submit the yellow form; some people are colorblind. Instead, ask them to submit the yellow form below.

5. High Arousal

Threat

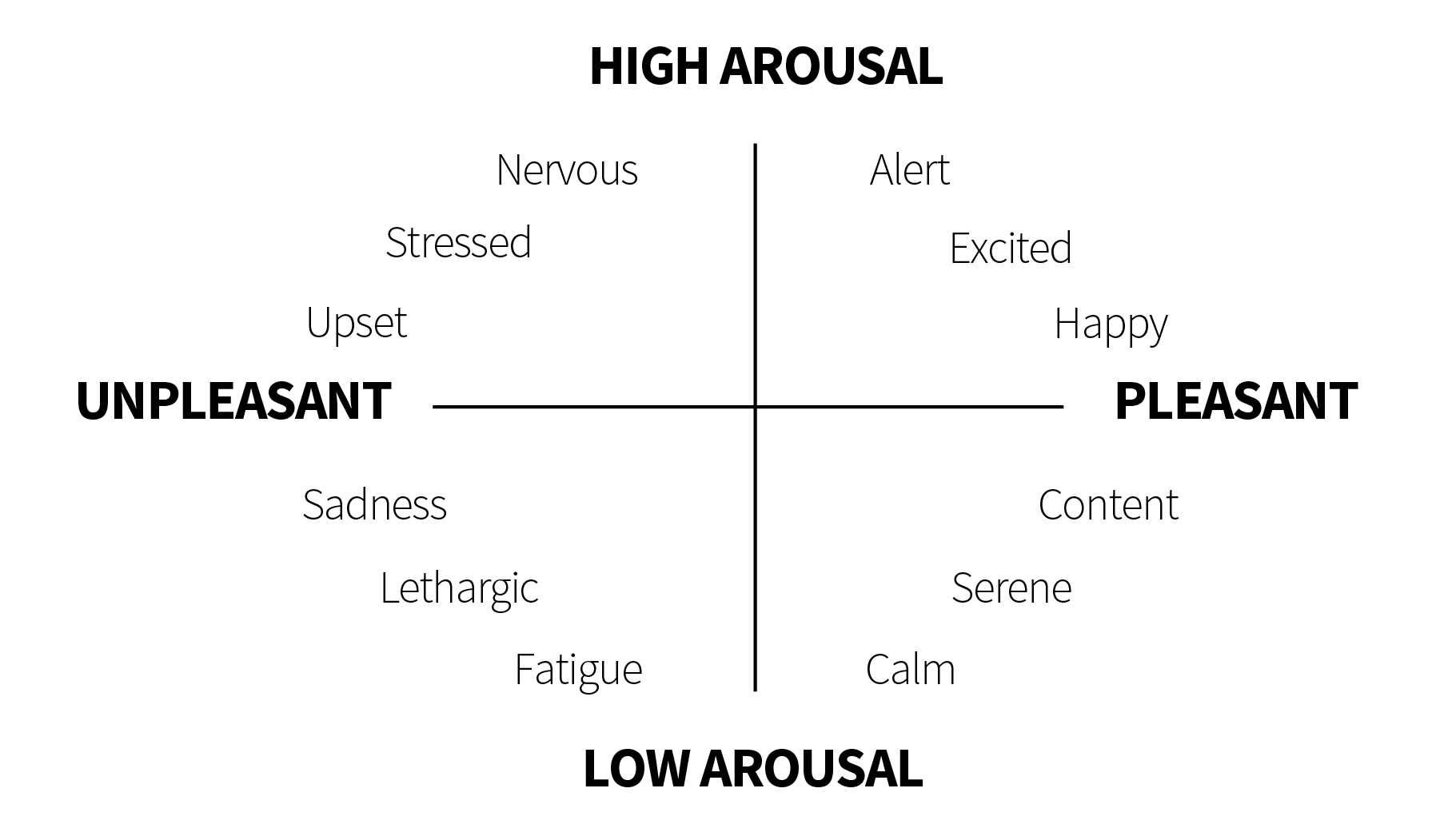

Emotion can be described along two dimensions: arousal and valence (Barrett & Russell, 1999):

- Arousal: Degree of activation

- Valence: Degree of pleasantness

High arousal emotions capture attention (Anderson, 2005).

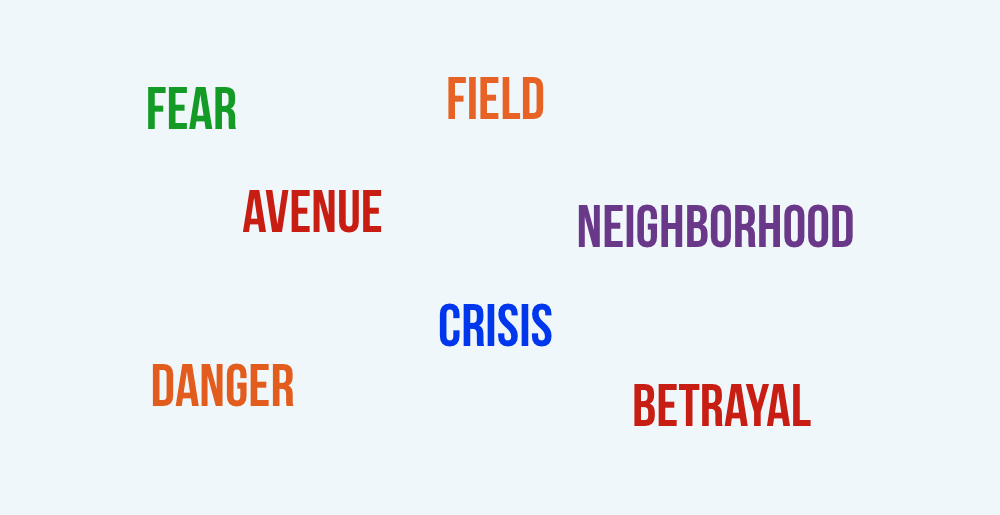

For example, below are random words. Don’t read them. Just mentally say the color of the text:

Turns out, we’re slower to name a color if the word is emotional (e.g., fear) because those words capture more attention (Algom, Chajut, & Lev, 2004).

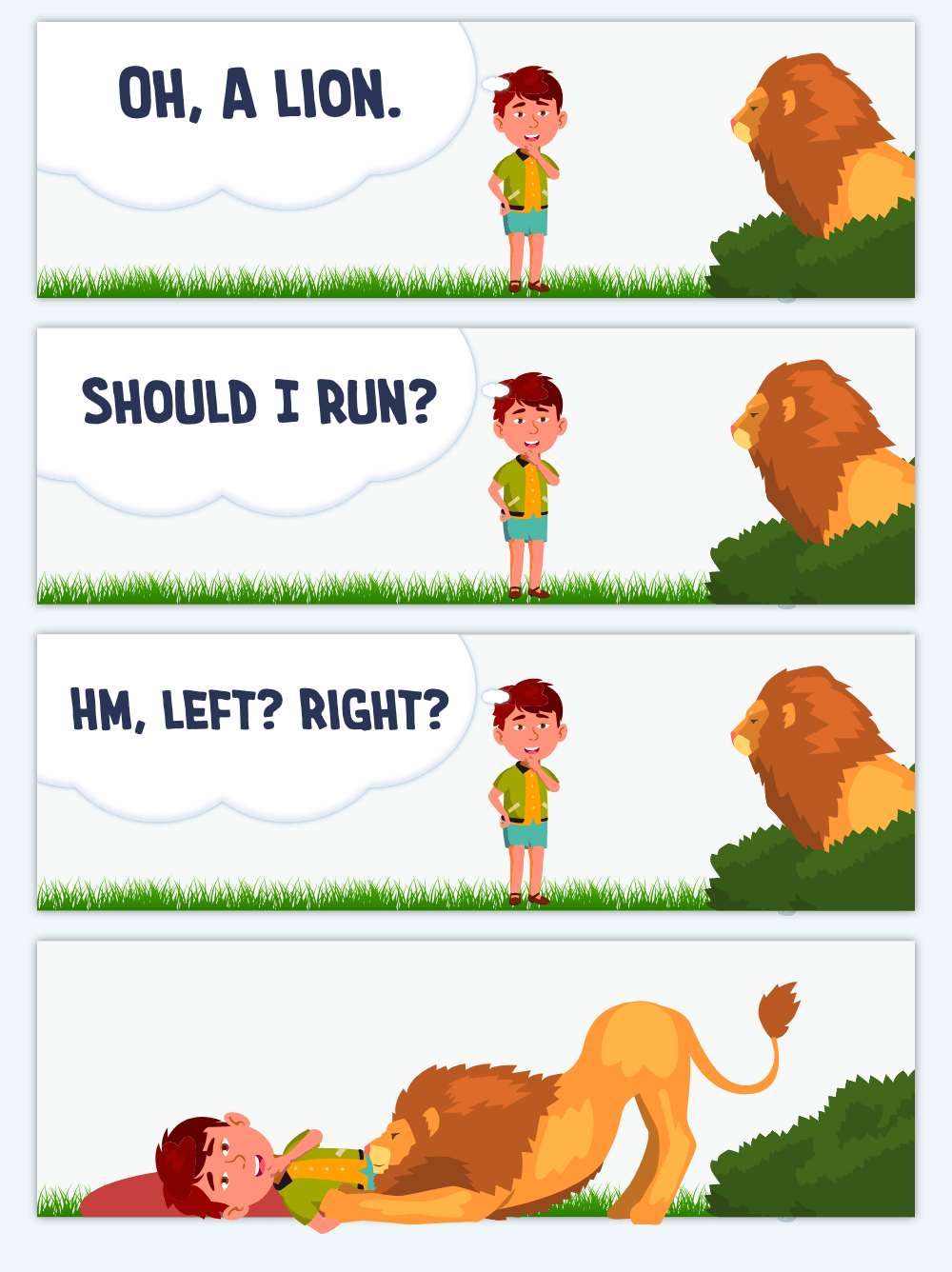

Threats grab attention, too. Even before we consciously notice them (Öhman & Mineka, 2001).

And that’s great. Imagine if we stopped to evaluate threats:

Your attention system evolved over millions of years. There’s a reason why so many people are afraid of snakes and other reptiles, even though we rarely see them today:

...the predatory defense system has its evolutionary origin in a prototypical fear of reptiles in early mammals who were targets for predation by the then dominant dinosaurs. (Öhman & Mineka, 2001, p. 486)

Your brain doesn’t detect the snake itself — it detects the curvilinear shape (LoBue, 2014).

Same with spiders:

...the reflexive capture of attention and awareness by spiders does not even require their categorization as animals. Performance was often comparable between identifiable spiders and stimuli which technically conformed to the spider template but that were otherwise categorically ambiguous (rectilinear spiders; New & German, 2015, p. 21)

Sex

Our ancestors could reproduce only if they found a mate, so sexual stimuli are hard-wired into our attention system (Most et al., 2007).

6. Unexpectedness

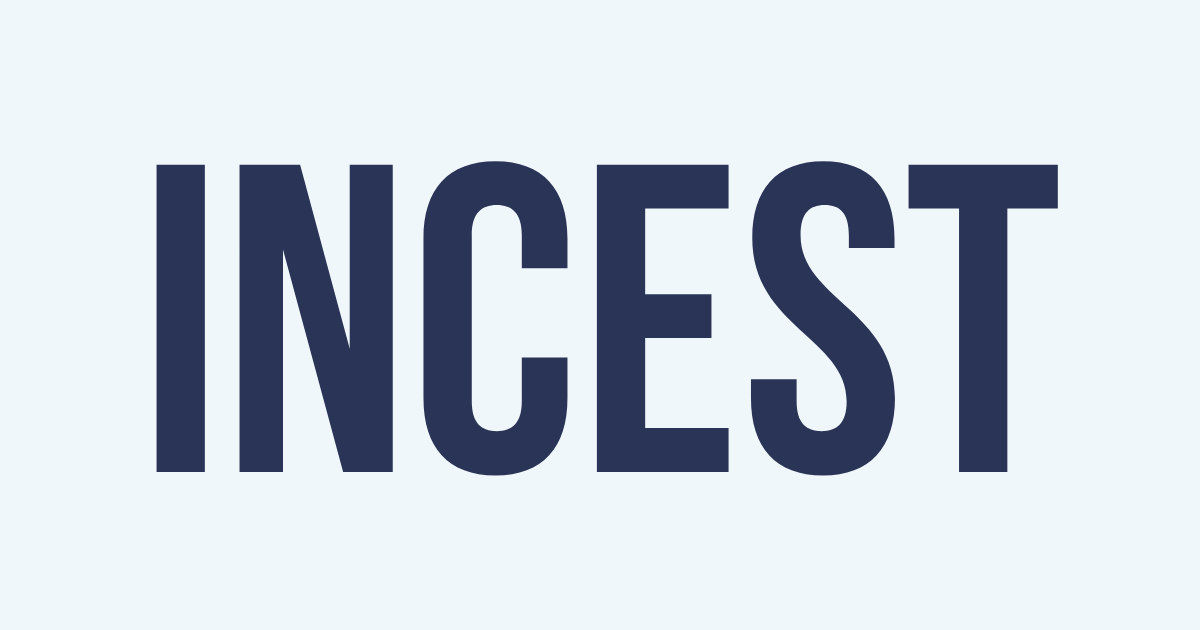

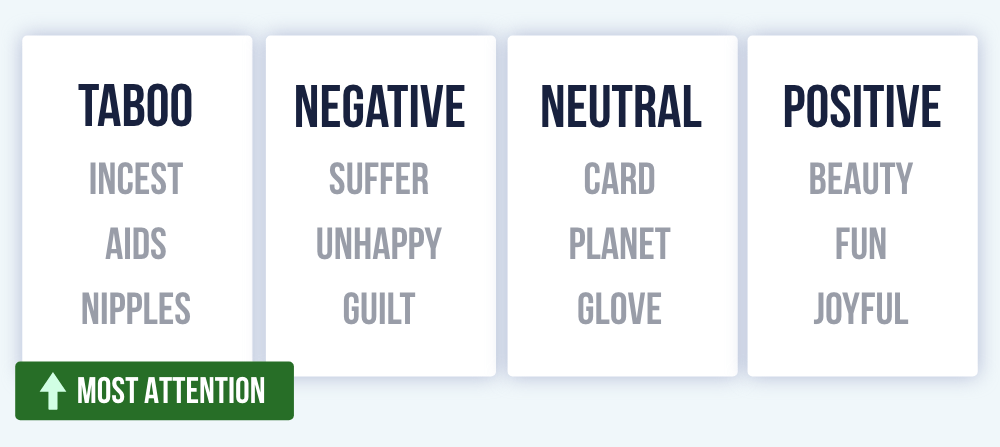

Taboo

Taboo words are attention-grabbing (Mathewson, Arnell, & Mansfield, 2008).

Some speakers (e.g., Tony Robbins) sustain the audience’s attention with profanity.

Novelty

Infants look at novel patterns more than familiar patterns (Fantz, 1964).

...novel popout would appear to have a great deal of survival value because it would allow organisms to quickly perceive and prepare to deal with novel intrusions into their familiar surroundings. (Johnston et al., 1990, p. 3)

Try the pique technique: Researchers received more money when they asked for an unusual amount (e.g., 37 cents), rather than a standard amount (e.g., 25 cents, 50 cents; Santos, Leve, & Pratkanis, 1994).

Novelty prevents a mindless refusal.

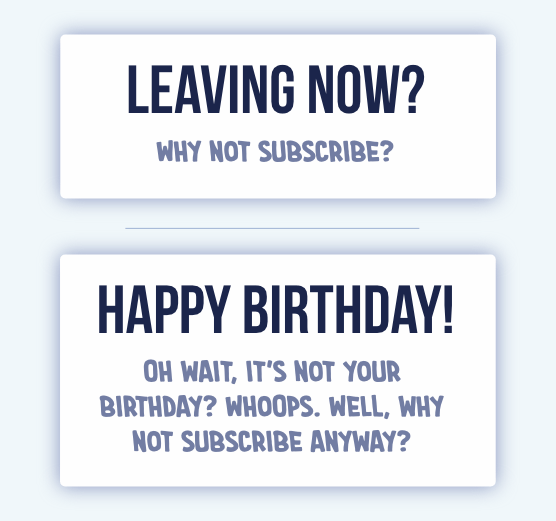

But any novelty can work. Some websites show a popup as people are leaving. Perhaps you could make it more novel:

7. Self-Relevance

Your Name

You’ve probably experienced the cocktail party effect: if someone nearby mentions your name, your attention snaps toward them (Moray, 1959).

Hearing our name activates the medial prefrontal cortex (Perrin et al., 2005). Babies can recognize their own name by roughly 4.5 months (Mandel, Jusczyk, & Pisoni, 1995).

It also happens with subliminal exposures to our written name (Alexopoulos et al., 2012).

But be cautious with personalization:

Participants reported being more likely to notice ads with their photo, holiday destination, and name, but also increasing levels of discomfort with increasing personalization. (Malheiros et al., 2012, p. 1)

Researchers don’t have a name for it. Maybe we could call it the how-the-f*ck-did-they-know-that effect.

Your Face

Our own face is as powerful as our own name (Tacikowski & Nowicka, 2010).

A complex bilateral network, involving frontal, parietal and occipital areas, appears to be associated with self-face recognition, with a particularly high implication of the right hemisphere. (Devue & Brédart, 2011, p. 2)

Sell clothing online? Perhaps you could create an interactive fitting room. Let users upload their picture to see how the clothing looks on them.

8. Goal-Relevance

No Goal

People are more likely to notice stimuli when they don’t have an active goal. Their perceptual load is lower, which leaves spare capacity for attention (Cartwright-Finch & Lavie, 2007).

For example, shoppers are less likely to notice banner ads when searching for specific products (Resnick & Albert, 2014). Browsing shoppers are more likely to notice them.

Capture attention by advertising in low-load contexts, such as when people are casually browsing.

Goal-Directed

When you search for a blue stimulus, you’re less likely to notice red stimuli (see Baluch & Itti, 2011).

Want people to notice your stimulus? Make it similar to whatever they are monitoring.

Clever advertisers hire a celebrity for a commercial, then air that commercial during the celebrity’s own TV show. While waiting for the show to return from a commercial break, viewers are subconsciously monitoring for the actor’s voice. Hearing this voice snaps their attention back to the TV.

References

- Abrams, R. A., & Christ, S. E. (2003). Motion onset captures attention. Psychological Science, 14(5), 427-432.

- Alexopoulos, T., Muller, D., Ric, F., & Marendaz, C. (2012). I, me, mine: Automatic attentional capture by self-related stimuli. European Journal of Social Psychology, 42(6), 770-779.

- Algom, D., Chajut, E., & Lev, S. (2004). A rational look at the emotional Stroop phenomenon: A generic slowdown, not a Stroop effect. Journal of Experimental Psychology: General, 133(3), 323.

- Allison, T., Puce, A., & McCarthy, G. (2000). Social perception from visual cues: Role of the STS region. Trends in Cognitive Sciences, 4(7), 267-278.

- Anderson, A. K. (2005). Affective influences on the attentional dynamics supporting awareness. Journal of Experimental Psychology: General, 134(2), 258.

- Ariga, A., & Watanabe, K. (2009). What is special about the index finger?: The index finger advantage in manipulating reflexive attentional shift. Japanese Psychological Research, 51(4), 258-265.

- Aronoff, J. (2006). How we recognize angry and happy emotion in people, places, and things. Cross-Cultural Research, 40(1), 83-105.

- Baluch, F., & Itti, L. (2011). Mechanisms of top-down attention. Trends in Neurosciences, 34(4), 210-224.

- Barrett, L. F., & Russell, J. A. (1999). The structure of current affect: Controversies and emerging consensus. Current Directions in Psychological Science, 8(1), 10-14.

- Bindemann, M., Scheepers, C., Ferguson, H. J., & Burton, A. M. (2010). Face, body, and center of gravity mediate person detection in natural scenes. Journal of Experimental Psychology: Human Perception and Performance, 36(6), 1477.

- Cartwright-Finch, U., & Lavie, N. (2007). The role of perceptual load in inattentional blindness. Cognition, 102(3), 321-340.

- Chance, M. R. (1967). Attention structure as the basis of primate rank orders. Man, 2(4), 503-518.

- Cian, L., Krishna, A., & Elder, R. S. (2015). A sign of things to come: Behavioral change through dynamic iconography. Journal of Consumer Research, 41(6), 1426-1446.

- Cosmides, L., & Tooby, J. (2013). Evolutionary psychology: New perspectives on cognition and motivation. Annual Review of Psychology, 64, 201-229.

- Desimone, R., Albright, T. D., Gross, C. G., & Bruce, C. (1984). Stimulus-selective properties of inferior temporal neurons in the macaque. Journal of Neuroscience, 4(8), 2051-2062.

- Devue, C., & Brédart, S. (2011). The neural correlates of visual self-recognition. Consciousness and Cognition, 20(1), 40-51.

- Downing, P. E., Bray, D., Rogers, J., & Childs, C. (2004). Bodies capture attention when nothing is expected. Cognition, 93(1), B27-B38.

- Downing, P. E., Jiang, Y., Shuman, M., & Kanwisher, N. (2001). A cortical area selective for visual processing of the human body. Science, 293(5539), 2470-2473.

- Eastwood, J. D., Smilek, D., & Merikle, P. M. (2003). Negative facial expression captures attention and disrupts performance. Perception & Psychophysics, 65(3), 352-358.

- Emery, N. J. (2000). The eyes have it: The neuroethology, function and evolution of social gaze. Neuroscience & Biobehavioral Reviews, 24(6), 581-604.

- Epstein, R. A., Higgins, J. S., Parker, W., Aguirre, G. K., & Cooperman, S. (2006). Cortical correlates of face and scene inversion: A comparison. Neuropsychologia, 44(7), 1145-1158.

- Fantz, R. L. (1964). Visual experience in infants: Decreased attention to familiar patterns relative to novel ones. Science, 146(3644), 668-670.

- Franconeri, S. L., & Simons, D. J. (2005). The dynamic events that capture visual attention: A reply to Abrams and Christ (2005). Perception & Psychophysics, 67(6), 962-966.

- Hommel, B., Pratt, J., Colzato, L., & Godijn, R. (2001). Symbolic control of visual attention. Psychological Science, 12(5), 360-365.

- Huang, L., & Pashler, H. (2005). Attention capacity and task difficulty in visual search. Cognition, 94(3), B101-B111.

- Johnston, W. A., Hawley, K. J., Plewe, S. H., Elliott, J. M., & DeWitt, M. J. (1990). Attention capture by novel stimuli. Journal of Experimental Psychology: General, 119(4), 397.

- Kaiser, M. D., Shiffrar, M., & Pelphrey, K. A. (2012). Socially tuned: Brain responses differentiating human and animal motion. Social Neuroscience, 7(3), 301-310.

- Langton, S. R., & Bruce, V. (2000). You must see the point: Automatic processing of cues to the direction of social attention. Journal of Experimental Psychology: Human Perception and Performance, 26(2), 747.

- Langton, S. R., Watt, R. J., & Bruce, V. (2000). Do the eyes have it? Cues to the direction of social attention. Trends in Cognitive Sciences, 4(2), 50-59.

- Larson, C. L., Aronoff, J., & Stearns, J. J. (2007). The shape of threat: Simple geometric forms evoke rapid and sustained capture of attention. Emotion, 7(3), 526.

- LoBue, V. (2014). Deconstructing the snake: The relative roles of perception, cognition, and emotion on threat detection. Emotion, 14(4), 701.

- Malheiros, M., Jennett, C., Patel, S., Brostoff, S., & Sasse, M. A. (2012, May). Too close for comfort: A study of the effectiveness and acceptability of rich-media personalized advertising. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 579-588).

- Mandel, D. R., Jusczyk, P. W., & Pisoni, D. B. (1995). Infants' recognition of the sound patterns of their own names. Psychological Science, 6(5), 314-317.

- Mathewson, K. J., Arnell, K. M., & Mansfield, C. A. (2008). Capturing and holding attention: The impact of emotional words in rapid serial visual presentation. Memory & Cognition, 36(1), 182-200.

- Milosavljevic, M., & Cerf, M. (2008). First attention then intention: Insights from computational neuroscience of vision. International Journal of Advertising, 27(3), 381-398.

- Moran, J., & Desimone, R. (1985). Selective attention gates visual processing in the extrastriate cortex. Science, 229(4715), 782-784.

- Moray, N. (1959). Attention in dichotic listening: Affective cues and the influence of instructions. Quarterly Journal of Experimental Psychology, 11(1), 56-60.

- Most, S. B., Smith, S. D., Cooter, A. B., Levy, B. N., & Zald, D. H. (2007). The naked truth: Positive, arousing distractors impair rapid target perception. Cognition and Emotion, 21(5), 964-981.

- New, J., Cosmides, L., & Tooby, J. (2007). Category-specific attention for animals reflects ancestral priorities, not expertise. Proceedings of the National Academy of Sciences, 104(42), 16598-16603.

- New, J. J., & German, T. C. (2015). Spiders at the cocktail party: An ancestral threat that surmounts inattentional blindness. Evolution and Human Behavior, 36(3), 165-173.

- Nothdurft, H. C. (2000). Salience from feature contrast: Additivity across dimensions. Vision Research, 40(10-12), 1183-1201.

- Öhman, A., Flykt, A., & Esteves, F. (2001). Emotion drives attention: Detecting the snake in the grass. Journal of Experimental Psychology: General, 130(3), 466.

- Öhman, A., & Mineka, S. (2001). Fears, phobias, and preparedness: Toward an evolved module of fear and fear learning. Psychological Review, 108(3), 483.

- Peelen, M. V., & Downing, P. E. (2007). The neural basis of visual body perception. Nature Reviews Neuroscience, 8(8), 636-648.

- Pratt, J., Radulescu, P. V., Guo, R. M., & Abrams, R. A. (2010). It’s alive! Animate motion captures visual attention. Psychological Science, 21(11), 1724-1730.

- Puce, A., Allison, T., Asgari, M., Gore, J. C., & McCarthy, G. (1996). Differential sensitivity of human visual cortex to faces, letterstrings, and textures: A functional magnetic resonance imaging study. Journal of Neuroscience, 16(16), 5205-5215.

- Regan, B. C., Julliot, C., Simmen, B., Viénot, F., Charles-Dominique, P., & Mollon, J. D. (2001). Fruits, foliage and the evolution of primate colour vision. Philosophical Transactions of the Royal Society of London, 356(1407), 229-283.

- Resnick, M., & Albert, W. (2014). The impact of advertising location and user task on the emergence of banner ad blindness: An eye-tracking study. International Journal of Human-Computer Interaction, 30(3), 206-219.

- Ristic, J., & Kingstone, A. (2006). Attention to arrows: Pointing to a new direction. Quarterly Journal of Experimental Psychology, 59(11), 1921-1930.

- Ro, T., Russell, C., & Lavie, N. (2001). Changing faces: A detection advantage in the flicker paradigm. Psychological Science, 12(1), 94-99.

- Santos, M. D., Leve, C., & Pratkanis, A. R. (1994). Hey buddy, can you spare seventeen cents? Mindful persuasion and the pique technique. Journal of Applied Social Psychology, 24(9), 755-764.

- Tacikowski, P., & Nowicka, A. (2010). Allocation of attention to self-name and self-face: An ERP study. Biological Psychology, 84(2), 318-324.

- Treisman, A., & Gormican, S. (1988). Feature analysis in early vision: Evidence from search asymmetries. Psychological Review, 95(1), 15.

- Troje, N. F. (2008). Biological motion perception. In A. I. Basbaum et al. (Eds.), The senses: A comprehensive reference (Vol. 2, pp. 231-238).

- Vallortigara, G., Regolin, L., & Marconato, F. (2005). Visually inexperienced chicks exhibit spontaneous preference for biological motion patterns. PLoS Biology, 3(7), e208.